The final OpenID Federation 1.0 specification was published today. This marks the end of a nearly decade-long journey and the beginning of new ones.

At the 2016 TNC conference, Lucy Lynch challenged Roland Hedberg, saying “If there is someone who should be able to bring the eduGAIN identity federation into the new world of OpenID Connect, it is you.” That was the starting point for the work.

Originally, the specification was titled “OpenID Connect Federation 1.0” and the mission was exactly that – to enable multilateral federation when using OpenID Connect. Over time, we realized that the core trust establishment framework defined by the specification could be applied to any protocol, and the spec was therefore renamed to “OpenID Federation 1.0”. Indeed, for a while, people had been clamoring to separate the protocol-independent trust establishment framework from the protocol-specific features for OpenID Connect and OAuth 2.0. I made that split after OpenID Federation 1.0 entered final review, and the resulting OpenID Federation 1.1 specifications also entered review for final status today.

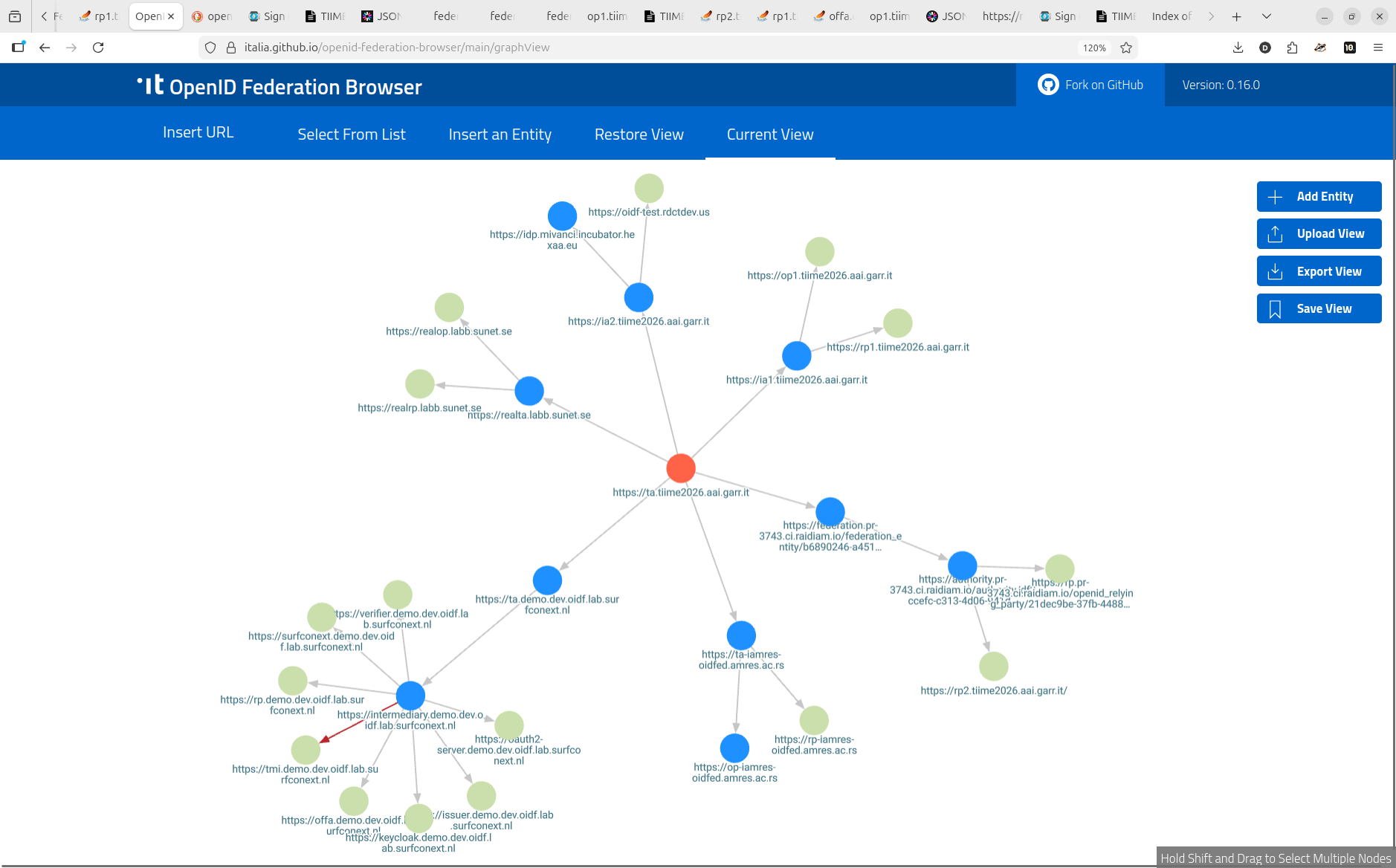

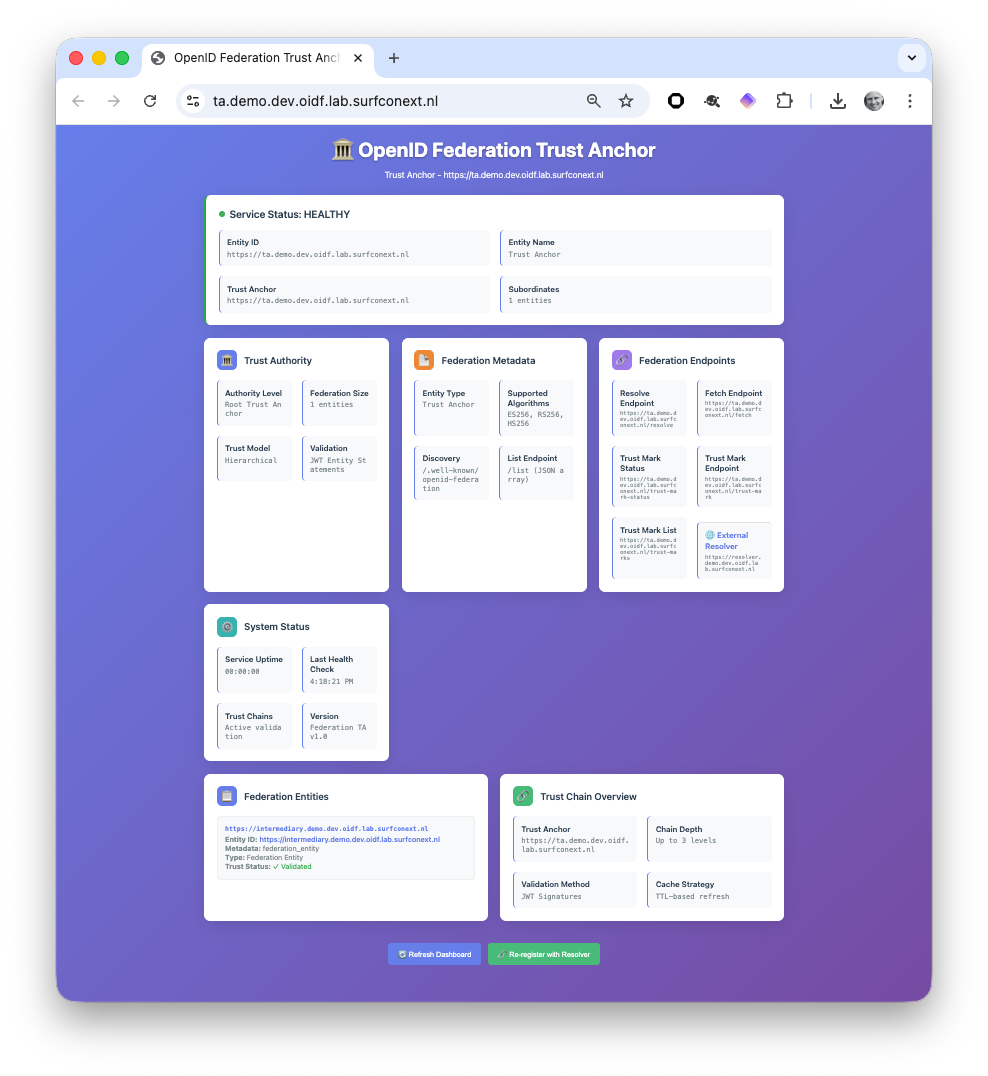

Like OpenID Connect, OpenID Federation benefited from multiple rounds of interop testing while it was being developed. Interops were held at NORDUnet 2017, SURFnet 2018, TNC/REFEDS 2019, Internet2/REFEDS 2019, three virtual interops in 2020, SUNET in 2025, and TIIME in 2026. Each time, we listened to the developer feedback and used it to improve the specification.

The early and enthusiastic support from the Research and Education community was foundational. They already knew what a multilateral federation is and why it’s useful. They patiently explained what they needed and why they needed it.

Many people contributed to the journey, but I want to call out the contributions of my co-authors in particular. Andreas Åkre Solberg was an early contributor and the inventor of Automatic Registration, which greatly simplifies deployments. John Bradley brought his practical security and deployment insights to the work. Giuseppe De Marco spearheaded production deployment for multiple Italian national federations and the Italian EUDI Wallet, informing the specification with real-world experience – particularly with the use of Trust Marks. Vladimir Dzhuvinov was an early implementer and brought his rigorous thinking about metadata operators and establishing trust to the effort.

Feedback from early implementations was critical to shaping the protocol. They included those by Authlete, Connect2ID, Raidiam, SimpleSAMLphp, DIGG, Sphereon, SPID/CIE in Italy, Shibboleth, GÉANT, SUNET, SURF, GRNET, eduGAIN/GARR, and of course Roland’s own implementation.

Demand for using OpenID Federation for protocols other than OpenID Connect and OAuth 2.0 informed our thinking as the specification developed. It is used for open finance in Australia. It is used for digital wallets in Italy. It is used for healthcare and national identity in Sweden. Each deployment brought insights to the effort that shaped the result for the better.

A team of security researchers at the University of Stuttgart performed a security analysis of the last implementer’s draft in 2024. They found an actionable security vulnerability applying to multiple protocols that we promptly fixed. Thanks to Dr. Ralf Küsters, Tim Würtele, and Pedram Hosseyni for their substantial contributions both to OpenID Federation and also to OpenID Connect, FAPI, and OAuth 2.0.

Multiple organizations played important roles in supporting this work. Special thanks to GÉANT, Connect2ID, and the SIROS Foundation for their significant financial support and encouragement. Multiple organizations hosted meetings at which significant discussions occurred, including NORDUnet, SUNET, SURF, GÉANT, and Internet2.

While this is the end of the journey for OpenID Federation 1.0, it is equally a step in important journeys under way. Multiple extensions to OpenID Federation are being developed, including OpenID Federation for Wallet Architectures 1.0 and OpenID Federation Extended Subordinate Listing 1.0. These provide important enhancements to the federation framework defined by the core specification needed for particular use cases.

Ecosystem building, adoption, and deployment are always a long journey, and it is one we’re in the midst of. National use cases in Europe and Australia are leading the way.

I am confident that the inherent benefits of the scalable and modular OpenID Federation approach will continue to win adherents the world over. For instance, it is scalable and easily managed in a way that large-scale PKI trust bridges will never be.

Watch this space for more stories from these journeys as they develop!

Finally, my most significant thanks go to my friend and collaborator Roland Hedberg. He did the very hard thing – starting from a blank sheet of paper and on it creating a new, useful, and elegant invention. My sincerest congratulations, Roland! It’s been a privilege to be on this journey with you!